Daihatsu Uses Artificial Intelligence (AI) to Enhance Competitiveness ~Commencing education program for AI personnel and also encouraging use of AI in the frontlines of development, production, office work, and other areas~

Mar. 04, 2021

Daihatsu Motor Co., Ltd.

Daihatsu Motor Co., Ltd. (hereinafter “Daihatsu”) announced today that it will actively use artificial intelligence (AI) to improve productivity and quality and to enhance its competitiveness. AI tools are already being adopted in a wide range of areas, including the frontlines of development, production, and office work. In parallel, Daihatsu is running a variety of AI education programs, which it aims to roll out companywide, so that any employee will be able to use AI in the future.

Specifically, Daihatsu has been providing AI awareness training to all employees in staff positions since December 2020, so that they learn the basics of AI and awareness spreads through each workplace. In the future, specialized training such as an AI “dojo” will be offered to divisions considering more sophisticated uses of AI. Through these initiatives, Daihatsu will progressively develop AI experts.

In addition, some employees at the frontlines of production and development have already undergone AI education, and the use of AI tools has started in various areas. At production frontlines, the use of AI tools started in January 2021, centered on frontline employees. Daihatsu’s Kyoto Plant developed a system that automatically detects parts being installed on vehicles and inspects their specifications, while the Head (Ikeda) Plant developed a system that determines the accuracy of stamped parts. Going forward, in addition to improving detection accuracy and extending the use of AI tools to other plants, Daihatsu will have frontline plant employees learn about AI so that they can actively carry out improvement activities in their own work areas. Through these efforts, Daihatsu aims to improve productivity and quality.

In research and development, AI tools were introduced in 2020 at the frontlines of powertrain (such as engine) development, where Daihatsu has been working to have machines take over sensory and abnormal-sound inspections that previously only experienced employees could perform. The AI is trained on the knocking (abnormal combustion) sound produced during measurement tests of engines under development so that machines can automatically identify anomalies (see Reference 1). This improves the operating rate of measurement facilities and accelerates development. The knocking-sound recognition technology is also being applied at the Head (Ikeda) Plant in an AI tool (see Reference 2) that determines the result of the hammering test*1 conducted during the suspension inspection process.

In general office work, an AI tool has been developed and introduced for the query response system that handles customer inquiries at the Customer Call Center. Daihatsu will also encourage its use in other areas of office work.

Going forward, through the spread and use of AI companywide, Daihatsu will continue to promote MONODUKURI and KOTODUKURI that play an intimate part in the lives of its customers based on its “Light you up” approach.

*1 A sensory inspection method, performed prior to shipment, in which an inspector taps with a hammer on the areas where vehicle suspension parts are installed and listens for abnormal sounds and the like.

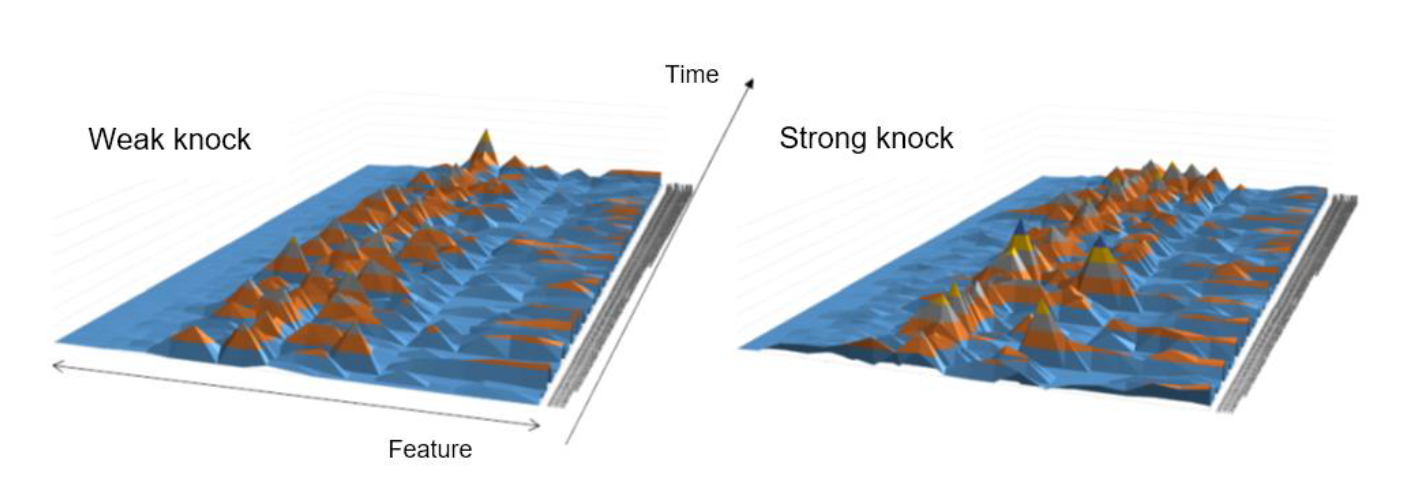

Reference 1:

Determination of knocking at engine development frontlines (conceptual image)

The visualization shows engine sound data recorded when knocking occurred; based on such data, the AI tool determines whether a result is normal or indicates knocking.

Reference 2:

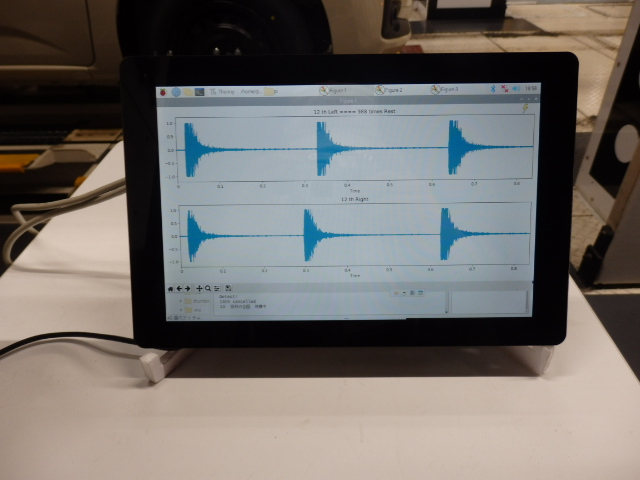

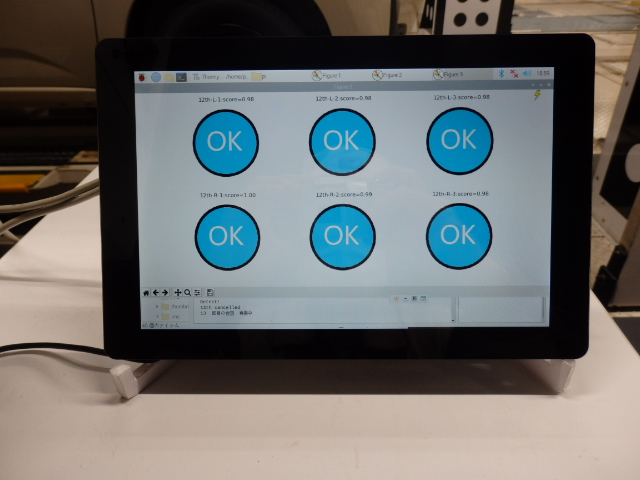

Conduct of hammering test on vehicle suspension parts at Head (Ikeda) Plant (conceptual image)

(1) Microphone to collect tapping sound → (2) Hammering by inspection personnel → (3) Tapping sound waveforms converted into graphs → (4) Display of AI determination results
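
For readers who want a concrete picture of steps (3) and (4), the following is a minimal sketch and not Daihatsu's implementation, whose details are not disclosed. It assumes a synthetic decaying sine wave in place of a recorded tap, a short-time Fourier transform as the "graph" of the waveform, and a simple frequency-tolerance rule standing in for the trained AI model. All function names, parameter values, and the "OK"/"NG" labels are hypothetical.

```python
import cmath
import math

SAMPLE_RATE = 8000  # Hz (assumed for illustration)
FRAME_LEN = 128     # samples per analysis frame (assumed)

def synth_tap(freq_hz, n_samples=512, decay=60.0):
    """Synthetic stand-in for a recorded tap: an exponentially decaying sine."""
    return [
        math.exp(-decay * t / SAMPLE_RATE)
        * math.sin(2 * math.pi * freq_hz * t / SAMPLE_RATE)
        for t in range(n_samples)
    ]

def spectrogram(signal, hop=64):
    """Step (3): short-time magnitude spectrum, one row per frame, one column per bin."""
    rows = []
    for start in range(0, len(signal) - FRAME_LEN + 1, hop):
        frame = signal[start:start + FRAME_LEN]
        # A Hann window reduces spectral leakage at the frame edges.
        windowed = [
            x * 0.5 * (1 - math.cos(2 * math.pi * i / (FRAME_LEN - 1)))
            for i, x in enumerate(frame)
        ]
        row = []
        for k in range(FRAME_LEN // 2 + 1):
            # Naive DFT for bin k; slow, but dependency-free.
            acc = sum(
                x * cmath.exp(-2j * math.pi * k * i / FRAME_LEN)
                for i, x in enumerate(windowed)
            )
            row.append(abs(acc))
        rows.append(row)
    return rows

def dominant_frequency(spec):
    """Frequency (Hz) of the bin with the largest magnitude summed over all frames."""
    n_bins = len(spec[0])
    totals = [sum(row[k] for row in spec) for k in range(n_bins)]
    peak_bin = max(range(n_bins), key=totals.__getitem__)
    return peak_bin * SAMPLE_RATE / FRAME_LEN

def judge(spec, expected_hz=1000.0, tolerance_hz=150.0):
    """Step (4) placeholder: a fixed tolerance rule, NOT a trained AI model."""
    deviation = abs(dominant_frequency(spec) - expected_hz)
    return "OK" if deviation <= tolerance_hz else "NG"

if __name__ == "__main__":
    for freq in (1000.0, 1500.0):
        print(freq, judge(spectrogram(synth_tap(freq))))
```

In a real system the rule in `judge` would be replaced by a model trained on labeled tapping sounds, and the input would come from the microphone in step (1) rather than from `synth_tap`.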
